Video Annotation: What Is It and How Automation Can Help

The Benefits of Automated Video Annotation for Your AI Models. Similar to image annotation, video annotation is a process that teaches computers to recognize objects. Both

The Benefits of Automated Video Annotation for Your AI Models

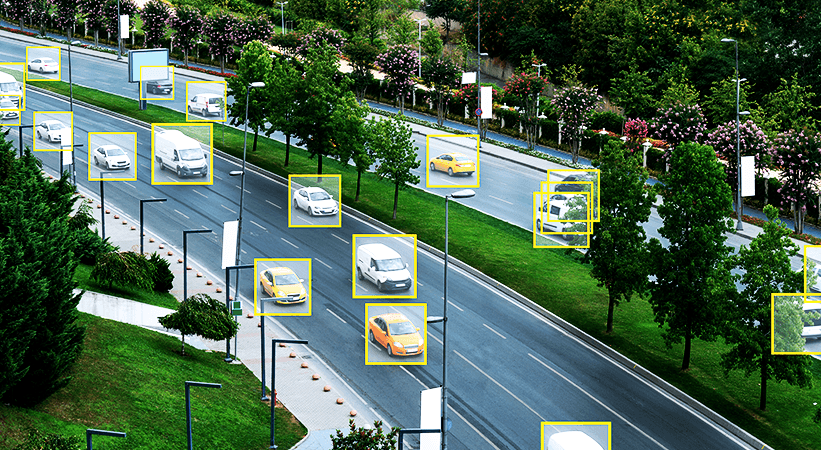

Similar to image annotation, video annotation is a process that teaches computers to recognize objects. Both annotation methods are part of the wider Artificial Intelligence (AI) field of Computer Vision (CV), which seeks to train computers to mimic the perceptive qualities of the human eye.In a video annotation project, a combination of human annotators and automated tools label target objects in video footage. An AI-powered computer then processes this labeled footage, ideally discovering through machine learning (ML) techniques how to identify target objects in new, unlabeled videos. The more accurate the video labels, the better the AI model will perform. Precise video annotation, with the help of automated tools, helps companies both deploy confidently and scale quickly.https://youtu.be/YIft9VtSpkQ

Video Annotation vs. Image Annotation

There are many similarities between video and image annotation. In our image annotation article, we covered the standard image annotation techniques, many of which are relevant when applying labels to video. There are notable differences between the two processes, however, that help companies decide which type of data to work with when they have the choice of one or the other.

Data

Video is a more complex data structure than image. However, in terms of information per unit of data, video offers greater insight. Teams can use it to not only identify an object’s position, but also whether that object is moving and in which direction. For instance, it’s unclear from an image if a person is in the process of sitting down or standing up. A video clarifies this.Video also can take advantage of information from previous frames to identify an object that may be partially obstructed. Image doesn’t have this ability. Taking these factors into account, video can produce more information per unit of data than an image.

Annotation Process

Video annotation has an added layer of difficulty compared to image annotation. Annotators must synchronize and track objects of varying states between frames. To make this more efficient, many teams have automated components of the process. Computers today can track objects across frames without need for human intervention and whole segments of video can be annotated with minimal human labor. The end result is that video annotation is often a much faster process than image annotation.

Accuracy

When teams use automation tools for video annotation, it reduces the chance for errors by offering greater continuity across frames. When annotating several images, it’s important to use the same labels for the same objects, but consistency errors are possible. When annotating video, a computer can automatically track one object across frames, and use context to remember that object throughout the video. This provides greater consistency and accuracy than image annotation, leading to greater accuracy in your AI model’s predictions.With the above factors accounted for, it often makes sense for companies to rely on video over images when choice is possible. Videos require less human labor and therefore less time to annotate, are more accurate, and provide more data per unit.

Video Annotation Techniques

Teams annotate video using one of two methods:

Single Image Method

Before automation tools became available, video annotation wasn’t very efficient. Companies used the single image method to extract all frames from a video and then annotate them as images using standard image annotation techniques. In a 30fps video, this would include 1,800 frames per minute. This process misses all of the benefits that video annotation offers and is as time-consuming and costly as annotating a large number of images. It also creates opportunities for error, as one object could be classified as one thing in one frame, and another in the next.

Continuous Frame Method

Today, automation tools are available to streamline the video annotation process through the continuous frame method. Computers can automatically track objects and their locations frame-by-frame, preserving the continuity and flow of the information captured. Computers rely on continuous frame techniques like optical flow to analyze the pixels in the previous and next frames and predict the motion of the pixels in the current frame.Using this level of context, the computer can accurately identify an object that’s present at the beginning of the video, disappears for several frames, and then returns later. If teams were to use the single image method instead, they might misidentify that object as a different object when it reappears later.This method is still not without challenges. Captured video, for example the footage used in surveillance, can be low resolution. To solve this, engineers are working to improve interpolation tools, such as optical flow, to better leverage context across frames for object identification.

Key Considerations in a Video Annotation Project

When implementing a video annotation project, what are the key steps you should take for success? An important consideration is the tools you select. To achieve the cost-savings of video annotation, it’s critical to use at least some level of automation. Many third parties offer video annotation automation tools that address specific use cases. Review your options carefully and select the tool or combination of tools that best suit your requirements.Another factor teams must pay attention to is your classifiers. Are these consistent throughout your video? Labeling with continuity will prevent the introduction of unneeded errors.Ensure you have enough training data to train your model with the accuracy you desire. The more labeled video data your AI model can process, the more precise it will be in making predictions about unlabeled data. Keeping these key considerations in mind, you’ll increase your likelihood of success in deployment.

Insight from Appen Video Annotation Expert, Tonghao Zhang

At Appen, we rely on our team of experts to help provide video annotation tools and services for our customers’ machine learning tools. Tonghao Zhang, the Senior Director of Product Management - Engineering Group, helps ensure our platform exceeds industry standards in providing high-quality video annotation. He comes from a background of big data & AI product management with 10+ years' experience building enterprise analytics platforms and AI solutions - especially around computer vision technology. Tonghao’s top insights when evaluating and fulfilling your video annotation needs include:

- Frame sampling strategy: evaluate how many frames per second you really need to extract from video. Think about your future strategy for model development. Make sure you have enough labeled frames for ground truth for both your current and future investments.

- Integrate a labeling tool: If you have a relatively matured model capability, don't miss the opportunity to boost the project efficiency and provide a testing ground for the existing model with our labeling tool.

- Ask for in-platform-review capabilities: You want to go through your results and provide feedback at an object-level. This enables you to go back to rework tasks with precise instructions regarding what to fix, if needed. Seamlessly refining your task instructions online will eventually save cost in terms of timing.

What Appen Can Do For You

At Appen, our data annotation experience spans over 25 years, over which time we have acquired advanced resources and expertise on the best formula for successful annotation projects. By combining our intelligent annotation platform, a team of annotators tailored for your projects, and meticulous human supervision by our AI crowd-sourcing specialists, we give you the high-quality training data you need to deploy world-class models at scale. Our text annotation, image annotation, audio annotation, and video annotation capabilities will cover the short-term and long-term demands of your team and your organization. Whatever your data annotation needs may be, our platform, our crowd, and managed services team are standing by to assist you in deploying and maintaining your AI and ML projects.Learn more about what annotation capabilities we have available to help you with your video annotation projects, or contact us today to speak with someone directly.